Students live stream Hampshire Senior Cup Finals to thousands of fans

Solent students helped to showcase two local cup finals

1 May 2026

Winners of a college competition celebrating emerging artistic talent from across the south of England announced

28 April 2026

Students partner with NHS to help start important health conversations among young people across Hampshire

28 April 2026

Solent partners with Go! Southampton to deliver a new high street business improvement plan

23 April 2026

Rankin recently gave Solent students insight into his work and his career path

23 April 2026

Law student has sights firmly set on success after winning silver at British Championship

22 April 2026

BA (Hons) Fine Art alumni will take over Guildhall Square to celebrate the tenth anniversary of the annual shipping container exhibition.

20 April 2026

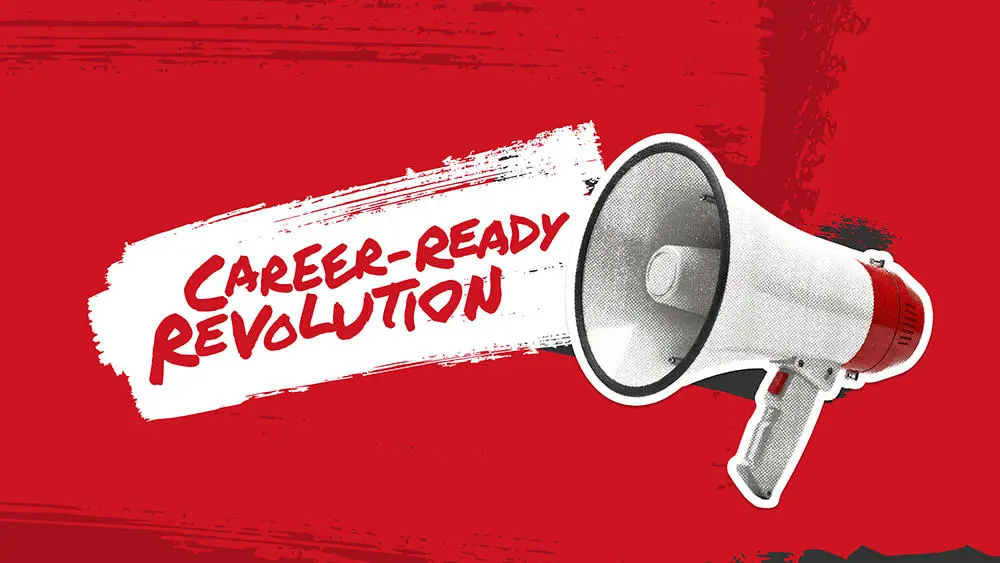

Solent's new campaign is set to redefine how the university promotes itself to prospective students

17 April 2026

Details of the pre-action process being taken by nine universities.

16 April 2026

Maritime Solent signs Civic Charter, strengthening ties between the region’s education, business and community sectors.

15 April 2026

How one Solent grad is spreading the beautiful game stateside ahead of the World Cup

8 April 2026

Athletes across the University are set to take part in the week-long event, which kicks off on 15 April

7 April 2026

Solent University celebrates student success at RTS Student Awards 2026.

1 April 2026

Ahead of Parallel Music Conference 2026, meet the two founders and Solent alumni who brought the idea together

1 April 2026

Solent Growth Partnership signs up to University's Civic Charter

31 March 2026