A student’s cultural tribute in 3D transforms Bulgarian folklore into a fantasy champion

A Southampton Solent University CGI Visual Effects student has embraced her Bulgarian heritage in her Final Major Project.

5 June 2026

Three Southampton Solent University graduates will play a central role in one of Southampton's biggest music moments this weekend.

4 June 2026

Students from across Southampton Solent University's fashion courses have been shortlisted for this year's Graduate Fashion Week awards.

4 June 2026

One of the most recognisable images in digital history transformed into a large-scale, interactive sculpture

3 June 2026

An educational project exploring the science behind butterflies and moths, aimed at engaging teenagers and young adults.

2 June 2026

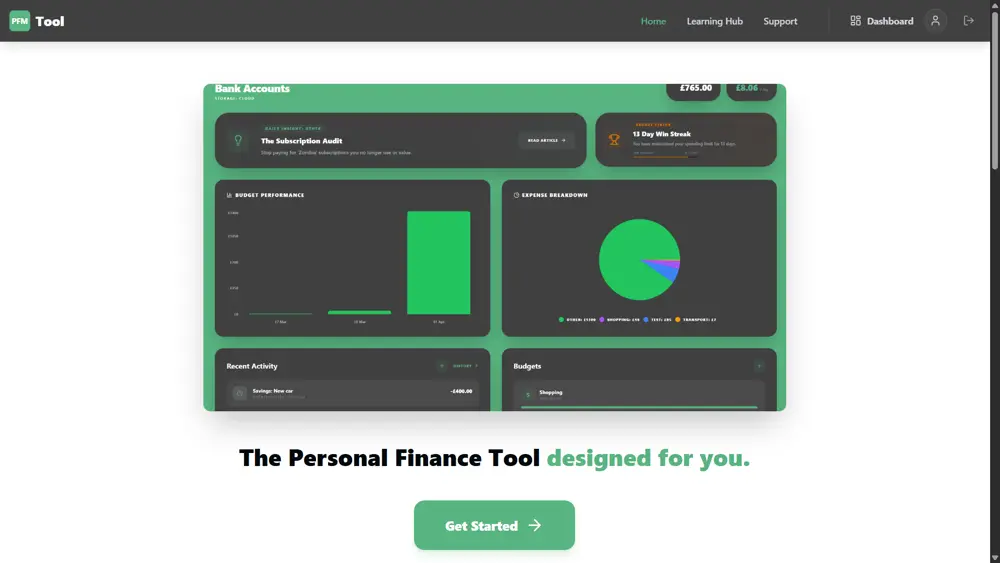

A Southampton Solent University Computer Science student has turned his passion for financial literacy into a fully functional app.

2 June 2026

A BA (Hons) Illustration student has taken a step toward his dream of becoming a tattoo artist by creating a hand-built portfolio.

1 June 2026

A Southampton Solent University graduate has been honoured at the New Mayor's Ceremony, winning the Young Entrepreneur 2026 award.

1 June 2026

From her studies at Southampton Solent University, Shivalika Puri has gone on to cover some of the world's biggest stories.

29 May 2026

Students presented their final projects to employers, industry advisory board members and sector specialists.

28 May 2026

Leo Wilkinson selected for prestigious Athena Pathway Youth Squad for the Youth America’s Cup

27 May 2026

Solent University’s MSc Logistics Management course has been officially accredited by the Chartered Institute of Procurement and Supply.

27 May 2026

A Southampton Solent University student has won a prestigious national award from the Football Writers’ Association (FWA).

21 May 2026

Solent recently welcomed a delegation of US visitors as part of the

19 May 2026

Solent graduate contributes to Gorillaz's latest album campaign

14 May 2026